With AI adoption rising, experts warn employers against ‘muddling through’ without clear rules

New Canadian survey findings reveal that employees are continuing to adopt AI tools en masse, but without clear governance or rules from their employers.

The report, KPMG’s Generative AI Adoption Index, shows that more than half of Canadian workers are now using these tools on the job, with most reporting productivity gains. However, employers are not seeing the ROI they were hoping for: although 93 percent of Canadian enterprises adopted generative AI, only two percent say they’ve seen returns.

Contracting, vendors and ‘shadow use’ of AI

KPMG’s findings show AI tools are being used daily and weekly in workplaces, which often means both formally deployed systems and employee-chosen tools accessed online.

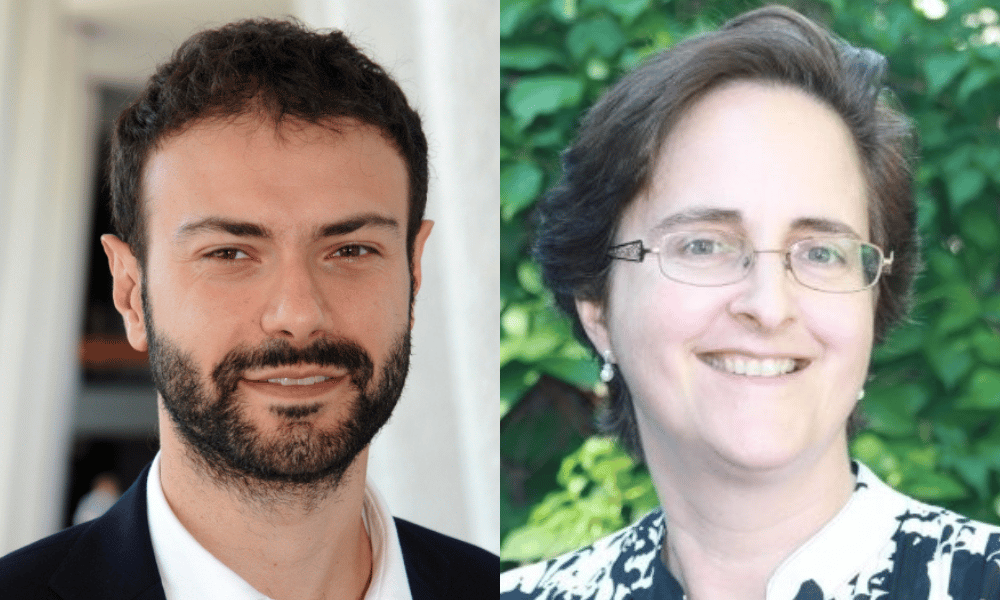

Teresa Scassa, Canada research chair in information law and policy at the University of Ottawa Faculty of Law, says employers need to think on two levels: official tools brought in through procurement and “shadow” use by staff.

On the procurement side, Scassa stresses that employers must align contracts and configurations with their legal and security obligations.

“The employer needs to … make sure that the tools that are available within the office officially are compliant with both legal requirements, but also the needs of the business for confidentiality or privacy protection and so on,” she says.

“The next level, of course, is to address this issue of shadow use as part of a broader employment policy that ensures that when employees use AI technologies, they're doing it consistently with the legal and ethical obligations of the company.”

This could mean an outright ban on shadow AI use, she says, but governance can’t stop there – it also has to cover how employees are allowed to use AI, and under what conditions.

She notes that in some workplaces this may be a limited concern, but in others it is central to managing risk and confidentiality.

Canadian AI privacy law and real-world mishaps

KPMG’s report points to widespread AI use by employees, including for tasks like drafting and research, which can involve personal or sensitive information.

Scassa emphasizes that Canadian privacy law already sets meaningful constraints on how personal information can be handled, regardless of whether an AI system is involved. Where employers need to be careful, she warns, is when employee personal data “escapes the fold.”

To illustrate how seemingly helpful tools can go wrong, she points to corporate meetings where AI note‑taking or transcription services can capture more than intended.

“The board goes ‘in camera’ for some more sensitive discussions, and then at the end of the meeting, the AI tool sends the transcript to absolutely everybody who is at the meeting and on the agenda for the meeting,” she outlines, noting a real-world example involving an Ontario doctor’s note-taker AI tool that breached patient privacy.

The doctor in question had set up a note-taker to join his meetings and take notes. However, he used his personal email address to set up the account, which was against hospital policy, and he failed to cancel the bot when he left that hospital. The tool continued accessing former colleagues’ meetings – including patient visits – through calendar meeting invitations, unbeknownst to anyone.

“This obviously is a problem. There's just a range of different challenges that come up, some of which laws already [apply to].”

AI policies and employer risk in Canada

Valerio De Stefano, law professor at York University’s Osgoode Hall Law School and Canada research chair in innovation, law and society, outlines the range of risk for employers and AI use.

He explains that employer understanding of the purpose and data flows of any AI system being used by employees is essential.

“The caution is always to try and understand what they need this technology for, but also what kind of data the technology processes and collects, and especially when the technology is used by employers to complement their decision-making processes,” De Stefano says.

“For instance, when the technology is used to track the productivity of someone, employers should always be aware that these technologies are never one hundred percent.”

De Stefano notes that many AI tools are integrated into workplaces as generic, off-the-shelf products, with little tailoring to specific workflows or jobs. In many cases, he says, technology is purchased and implemented without full understanding of the actual workflows it is meant to assist.

Beyond affecting productivity, these circumstances can cause ruptures at higher levels, he adds: “The technology can actually be misleading.”

Workplace AI policies should focus on bias

KPMG’s index indicates that AI use is spreading quickly among Canadian employees, but that does not guarantee outcomes are fair, accurate or compliant with Canadian legal standards.

De Stefano stresses that AI used in hiring or employee monitoring is an especially vulnerable point for employers, as the practice can raise not only occupational health and safety issues but human rights concerns as well.

“It is always important when a new technology is implemented in the workplace, to understand why that's the case,” he stresses.

“What does the employer intend to achieve with this new technology? But most importantly, it's essential to understand whether this technology, no matter what the sellers and providers of this technology say, is actually able to do what [it] is marketed for.”

From his perspective, one of the most practical safeguards employers can build into their approach is structured dialogue with workers before and during adoption.

This includes sharing information about what a system does, what data it uses and how outputs will be applied in day-to-day decisions. Dialogue can also work to counterbalance selling pressure from third-party vendors, he adds.

“It's always important for employers not to fall for the easy rhetoric that many tech companies are putting forward,” he says.

“The good way of understanding that is to have a conversation with the workforce … to have some form of collective discussion.”

AI employee performance tracking and legal risks

Beyond hiring, De Stefano flags productivity-tracking systems that standardize expectations around a narrow “ideal” worker profile, as these tools may not account for varying factors that can impact workflows, leading to unintentional bias or discriminatory results.

“When technology is used to track the productivity of people, the standards, the benchmarks that this technology will use will probably be tailored upon a certain ideal, typical worker,” he explains.

“This does not take into account disability, parental needs, many other reasons for accommodating a different productivity expectation or standard.”

Employee privacy remains relevant in this context, he adds, explaining that Canadian laws around AI currently lag behind those of other jurisdictions.

This can potentially lead employers to underestimate their responsibilities.

“Employers should not start from the assumption that the employees don't have any kind of actionable right to privacy at work, even when [there] are not clear standards,” he says.

Scassa notes that even where AI use is permitted by policy, failures in process or verification can cause significant reputational and financial damage.

She says, “even if it's allowed by internal policies, those policies may require employees to check and verify information, and maybe that wasn't done, or they didn't have the appropriate mechanisms … that kind of thing can be really embarrassing within an organization.”