'Workslop is what happens when employees use AI to offload work rather than as a collaborator to enhance workflows'

New research from Stanford University and BetterUp Labs has found that 40 percent of employees say they have experienced “workslop” in the last month. It isn’t just the latest catchphrase: it’s a growing problem that is already costing employers about US$186 per employee each month in wasted time.

Workslop, defined by the researchers as “AI-generated work content that masquerades as good work,” is now “ubiquitous,” according to Fenwick McKelvey, associate professor of communication studies at Concordia University and co-director of the Applied AI Institute.

“We have very few ways of effectively dealing with it, and we released the technology without any safeguards to prevent that,” McKelvey says. He explains that the pace and hype of AI advancement are leaving many employers behind the curve in implementing the technology, scrambling to catch up with their own policies and processes.

“It's not surprising that workplace slop is on the rise.”

Employers aren't the only ones responsible for AI use rules, though, McKelvey stresses. Canada's policy-makers and the slow pace of AI regulation are also factors: “Most of our AI policy is really focused on the economic development angle, and less on some of the social impacts that we're seeing here.”

Not enough employer planning leads to AI workslop

The research, based on the responses of 1,150 full-time U.S. desk workers surveyed in September 2025, found that workslop costs an average 10,000-employee company US$9 million annually.

The way employers roll out technology matters. When a rollout is rushed without enough forethought, McKelvey says, employees will use the tools in whatever way works best for them, and where sanctioned tools fall short, they may turn to ones that don’t comply with employer policies.

“This is certainly something that companies are dealing with, because employees are using open AI tools or AI tools without necessarily getting buy-in or acceptability,” McKelvey says.

“That's a big issue for any company, is what tools they're telling their employees to use, and what tools employees are using, and that creates all kinds of issues around what data is being exchanged, whether there's any confidential information being transmitted.”

What ‘good enough’ AI really costs

As the researchers explain, a major driver of workslop's spread is optics. Workslop often looks like finished work but doesn't stand up to scrutiny: long but insubstantial reports, polished-looking presentations missing important information, or emails that seem professional but don’t make sense.

Because it passes an initial “glance test,” the authors say, workslop can spread quickly inside organizations.

Ozgur Turetken, professor of information technology management at Toronto Metropolitan University, says workslop may be only the tip of the iceberg, with bigger and more consequential impacts hiding below the surface.

“When we expect it to do everything, and some of it is done sloppily, there's a lot of extra effort to identify what part of it is good, and what part of what we're getting is not good,” Turetken says.

“And that's going to be a lot of waste of effort and time.”

For Turetken, the discussion around workslop should be less about the extra work required to fix it, and more about the workslop that gets missed: “Imagine a scenario where they don't even know.”

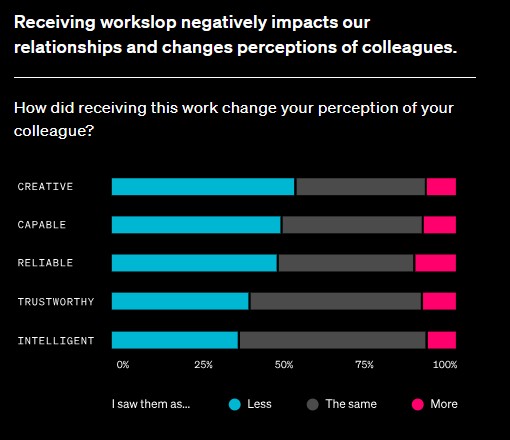

Source: BetterUp Labs

The numbers are not trivial. Employees can spend up to two hours dealing with each workslop instance, the U.S. research found. This includes engaging in “verification loops” as co-workers go back and forth in search of clarification.

“We take the genAI work as-is, and then try to make decisions based on it, or put things out there with our partners or customers or suppliers, and it's just all inaccurate,” Turetken says.

“That can cause real serious problems.”

Turetken points to these expanding verification processes as one way productivity gains disappear when teams start using genAI: “You just start drawing circles, trying to figure it out. And then after a while, you just realize 'Did that even save me any time? If I did everything from scratch, would it be better?'”

The long-term organizational risks of AI

McKelvey warns that over‑reliance on AI can lead to long-term, existential risks for organizations as a result of cognitive offloading and de‑skilling.

By prioritizing quantity over quality in the output of early-career employees, who haven’t yet internalized industry or company standards, organizations risk losing their future knowledge base and, with it, the ability to grow, he says.

“AI only works if you know what you're looking for,” he says, adding that another challenge is the gray area employees face over whether they are expected to produce more, or better, work because of AI integration.

“If AI is being added, is it actually benefiting the work, or is it putting the expectation that they're supposed to generate more work? This is one of the real tensions around AI change management,” McKelvey says.

“The counterpoint of that is that it seems as though AI … is actually being used to degrade the quality of the job.”

AI workslop and employee relationships

According to the research, employees were less inclined to work with a colleague who sent workslop, perceiving that person as less creative, capable and reliable. In practice, this shows up as more clarifying meetings, more verification loops, and fewer people willing to partner with the offender on the next project.

Kristina Rapuano, senior research scientist at BetterUp Labs, explains how workslop essentially shifts work onto other colleagues, affecting relationships and organizational stability.

“Workslop is what happens when employees use AI to offload work rather than as a collaborator to enhance workflows,” she says.

“Ultimately, AI-generated content looks polished on the surface, but lacks the depth, accuracy or context to be genuinely useful. It’s work that masquerades as complete, but ultimately pushes the burden onto the next person who has to decode, correct, or redo it.”

Blanket AI directives lead to workslop, turnover

The research cautions leaders against indiscriminate directives that encourage or mandate AI use everywhere: “That kind of blanket messaging models poor discernment, and employees follow suit.” When organizations model poor discernment, employees respond in kind, copying and pasting outputs without context or checks.

Turetken urges leaders to make ownership explicit at every level and to lead by example, taking responsibility for their own AI-assisted mistakes while holding employees who create workslop accountable.

“At the end of the day, people still own their part of the work that is being done, and they should be responsible for it,” he says.

“Lead by example, by saying, ‘Look, when I screw up because I use genAI, it's on me to fix it, and likewise, I expect the same from each and every one of you.’”

Rapuano echoes this, explaining that leaders play a crucial role in preventing workslop from starting and spreading in a workplace.

“That’s where managers really come into play. Numerous studies show that these leaders’ emotions, attitudes, and behaviors are contagious for those who report to them,” she says.

“AI is no exception ... When rolling out a new AI implementation from the top, leaders need to receive proper training and enablement to model intentional, purposeful AI use.”

McKelvey adds that sensitivity to employee sentiment around AI use is crucial, as ignoring this element of implementation can lead to dissatisfaction and, eventually, turnover.

“I think organizations have to be really mindful about both their employee satisfaction and making sure that when they're implementing AI, it's just not the expectation that they're going to be ... immediately more productive,” McKelvey says.

“The ability of generating more information quickly doesn't actually mean more productivity.”