With costs rising, employers should focus on AI outcomes, not usage, say experts

The latest hot topic coming out of the AI development race is exposing an uncomfortable reality: employers encouraging staff to embrace AI tools may be about to stumble onto a huge – and hidden – cost.

According to Canadian experts, "tokenmaxxing" – tech workers spending as many AI “tokens” as possible – is less a measure of productivity and more an encouragement to be wasteful.

Software developers at firms such as Meta and OpenAI are racing to spend tokens and competing on leaderboards. But employers tempted to incentivize their own staff the same way may want to think twice, these experts say.

What is a token, and why does it cost money?

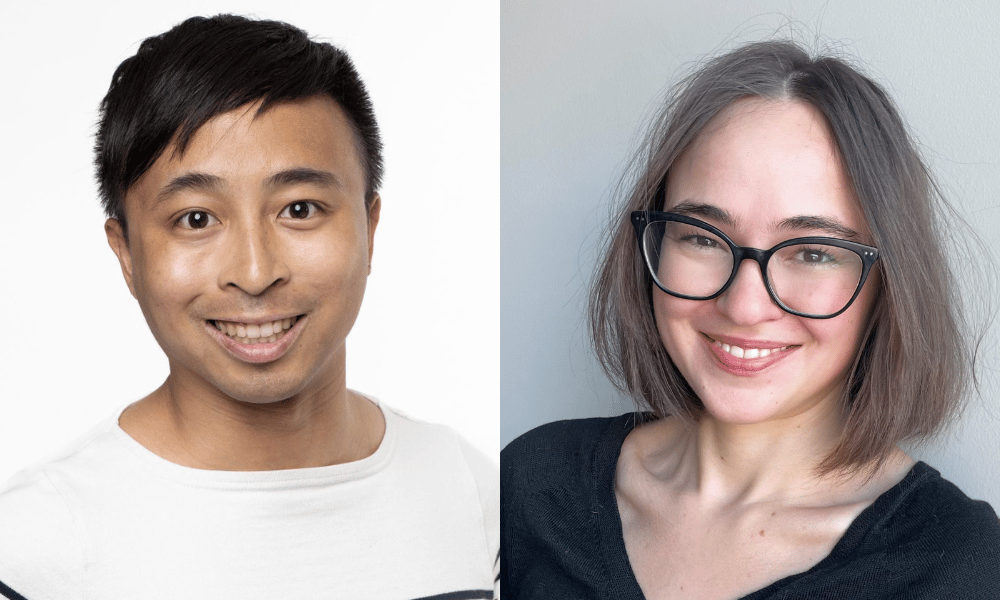

Before employers can evaluate any metric, they need to understand what it measures. Viet Vu, manager of economic research at The Dais at Toronto Metropolitan University, explains that tokens are the basic unit of how large language models (LLMs) process information.

"A token is just a bit of information – a letter, a text, a word, a sentence," Vu says.

"How intensively you use these tools can generally be understood by how many words you're putting into it, how many words it's putting out, how many lines of code it's producing, and all of that combined gets to this measure of token."

Companies such as Anthropic and OpenAI charge users based on token consumption, and Vu notes those costs have not been falling. That is bad news for companies that once encouraged unlimited AI use by employees, as they may now need to cap usage to keep ballooning token costs in check.

"The computing cost of receiving and processing and outputting each of these tokens has not been going down,” Vu says.

“It's, in fact, been going up because of the massive shortage in computing resources, infrastructure chips, memory chips, all of that."
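To see how token-based billing adds up, here is a rough back-of-the-envelope sketch. The prices, token volumes and team size are hypothetical placeholders, not figures from Vu or from any vendor's price list; it assumes only that input and output tokens are billed at separate per-million rates.

```python
# Hypothetical prices in dollars per million tokens. Real vendor pricing
# differs by model and changes over time.
INPUT_PRICE_PER_MILLION = 3.00
OUTPUT_PRICE_PER_MILLION = 15.00


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated dollar cost of a given token volume."""
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


# Hypothetical developer: 2 million input tokens and 500,000 output tokens
# per working day.
daily_cost = estimate_cost(2_000_000, 500_000)

# Hypothetical team: 50 developers, 20 working days per month.
monthly_team_cost = daily_cost * 20 * 50

print(f"Per developer per day: ${daily_cost:,.2f}")
print(f"Team of 50 per month: ${monthly_team_cost:,.2f}")
```

Under these assumed numbers, a modest daily bill per person becomes a five-figure monthly line item for the team, which is the kind of hidden cost a usage leaderboard would push higher.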

Measuring cognitive work in knowledge workers

Vera Khovanskaya, assistant professor in the Faculty of Information at the University of Toronto, says the challenge of measuring cognitive work isn’t new.

When personal computers arrived in offices in the 1980s and 1990s, she says, companies faced a now-classic problem: they had invested heavily in new technology but didn’t know exactly how employees were using the tools – let alone how to measure it.

“People were spending all this money on PCs, but overall productivity seemed to kind of be going down,” says Khovanskaya.

“A lot of it was because no one knew what people were doing on those computers, because we hadn't developed a system for tracking office work."

What followed was what she calls an “empowerment and measurement regime.” To deal with the shift of knowledge work onto computing systems, and the resulting challenge of quantifying that work, teams were decentralized and given the autonomy to plan their own work. New tracking systems were implemented to compensate for the loss of visibility into knowledge workers' processes, and Khovanskaya says those systems have yet to be perfected.

"The problem is that none of these measurements are that great, because the work happens in people's minds. You can't see it,” she says.

"We've been continuing to face this in decentralized organizations. You have to be close to the work to understand how to evaluate it.”

Measuring AI token use

Khovanskaya notes that for companies backed by venture capital, demonstrating high worker AI adoption and usage to investors may be a more pressing motivation than measuring output.

"No one has to believe that it's a good measurement of work,” she says. “But we could believe that it would be good for our company to demonstrate this.”

Vu agrees that token dashboards can serve a limited legitimate function, for now, in encouraging employees to use and experiment with AI. But performance measurement cannot be token-based, he asserts.

“The only thing that you're measuring, when you're measuring the amount of tokens that people use, is how intensively are they using AI tools. It says nothing about the quality of the work, nor the actual economic or business benefits that arise from the work,” he says.

“What leads to better productivity is how you smartly use these tools, and that's a lot harder to measure, because folks who use AI smartly may not use nearly as many tokens to accomplish the exact same set of tasks that someone using tons and tons more tokens would be doing.”
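Vu's point can be illustrated with a small sketch that ranks the same people two ways. The names and numbers are invented, and `tasks_completed` is only a crude stand-in for the outcomes a manager close to the work would actually judge.

```python
from dataclasses import dataclass


@dataclass
class Developer:
    name: str
    tokens_used: int
    tasks_completed: int  # hypothetical stand-in for a real outcome measure


def tokens_per_task(dev: Developer) -> float:
    """Tokens spent for each completed task (lower means more frugal)."""
    return dev.tokens_used / dev.tasks_completed


# Invented data: A and B finish the same number of tasks, but A burns
# far more tokens doing it.
team = [
    Developer("A", 9_000_000, 12),
    Developer("B", 2_400_000, 12),
    Developer("C", 5_000_000, 10),
]

# A token leaderboard rewards whoever spends the most.
by_tokens = [d.name for d in sorted(team, key=lambda d: d.tokens_used, reverse=True)]

# Ranking by tokens per completed task reverses the order.
by_frugality = [d.name for d in sorted(team, key=tokens_per_task)]

print("Token leaderboard:", by_tokens)
print("Fewest tokens per task:", by_frugality)
```

In this made-up team, the person at the top of the token leaderboard is at the bottom once output is taken into account, even though the task count itself says nothing about the quality of the work.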

What to do instead: back to basics

For employers, the path forward is not a new metric; Khovanskaya says it’s about a return to fundamentals and a focus on managers who are close to the work. And if AI usage comes up as a metric when performance assessment strategies are being planned, she strongly suggests HR leaders take a close look at where that line of thinking is coming from, and why.

"Be really clear about what you're measuring, why you're measuring it, where's that pressure coming from,” Khovanskaya says.

“Is it coming from your investors? Is it coming from someone in your own management who's really drunk the Kool-Aid?"

Vu puts it plainly, urging employers to understand that adoption and intensity of AI usage are not a “proxy for value.”

"Stick with the principle that we already know in human resource and talent management," Vu says.

"Stick on the actual outcomes, and the products that these people are producing, as the right source of measuring performance."

Khovanskaya points to one “slightly more generous” possible use case for token tracking: flagging employees who may need extra training if their token usage is high compared to their colleagues.

“Whose work process seems to be taking up outsized amounts of resources? What do we need to do to give that person more mentorship or guidance, such that they don't feel like they have to run everything through AI?” she says.

“That's a cry for help, for a person who's being over-responsibilized and under-mentored in the workplace. That might be interesting for HR.”
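One way an HR or engineering team might surface such cases is to compare each person's usage with the team median. The sketch below is an assumption-laden illustration, not a method described by Khovanskaya: the names, token counts and the threshold of three times the median are all hypothetical, and a flag is meant as a prompt for a conversation about mentorship, not as a performance score.

```python
from statistics import median


def flag_high_usage(usage_by_person: dict[str, int], ratio: float = 3.0) -> list[str]:
    """Return the names whose token usage exceeds `ratio` times the team median."""
    team_median = median(usage_by_person.values())
    return sorted(
        name
        for name, tokens in usage_by_person.items()
        if tokens > ratio * team_median
    )


# Invented monthly token counts for a five-person team.
monthly_usage = {
    "A": 1_200_000,
    "B": 900_000,
    "C": 1_500_000,
    "D": 6_800_000,
    "E": 1_100_000,
}

flagged = flag_high_usage(monthly_usage)
print("May benefit from extra mentorship or guidance:", flagged)
```

The median is used here rather than the average so that one very heavy user does not drag the baseline upward and hide their own outlier status.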