'You cannot bury your head in the sand — it's coming, it's already here'

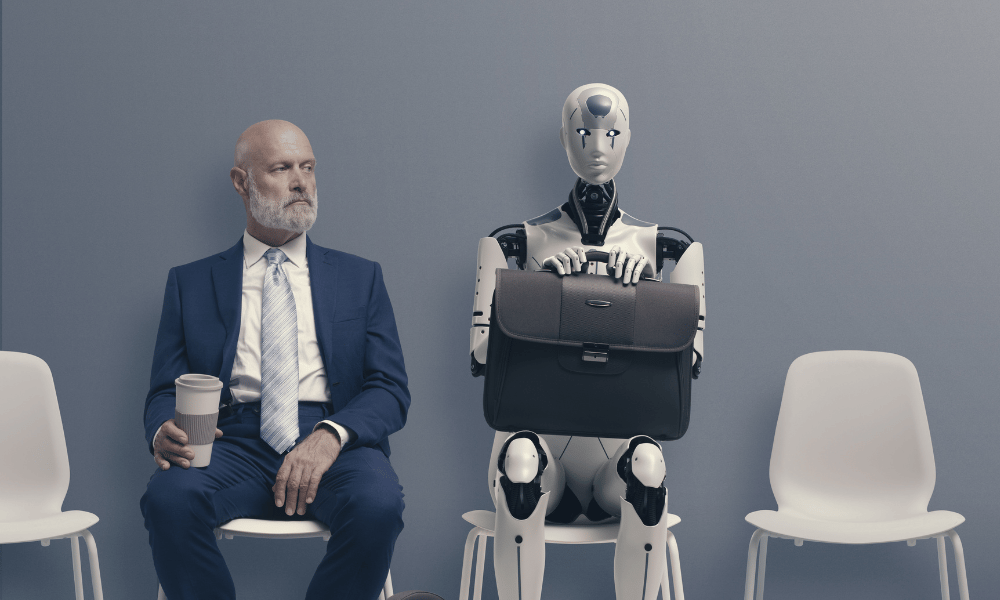

When it comes to ChatGPT, HR should be both worried and excited.

“But, more importantly, I think they should be prepared — prepared to embrace the reality of really smart artificial intelligence changing the way that we do business on a number of fronts,” says Joey Price, CEO and founder of Jumpstart HR.

“It’s something to have on your radar and to start getting familiar with, definitely… you cannot bury your head in the sand on this topic — it's coming, it's already here.”

The biggest danger? If an HR team is not even considering it, says Todd Mitchem, executive vice-president of learning design and development at AMP Learning and Development.

“We’ve got to really pay attention here, because AI is going to be a massive part of what we do,” he says. The worst thing HR could do right now, he adds, is try to put a “genie” like ChatGPT back in the bottle.

Game-changing tool

In dealing with ChatGPT, it’s about treating it like any other technological tool, even though it is a game-changer, says Mitchem. That means asking, “How do we leverage it so that it's useful?” rather than treating it as a detriment or as something that will be misused.

And that means first understanding it, he says.

“You've got to first get your arms around what it is. So rather than act off of the fear of it, start to really understand ‘What is it?’… because a lot of people either read about it, or see a video about it, or they hear about it, but they don't take the time to really learn about what it's capable of, or what it isn't capable of. And that's when they get scared or nervous, or just trepidatious in general.”

HR should find people on their team who are really excited about AI, meaning they’re experimenting with it, using it and want to better understand it, says Mitchem, who is based in Denver. Then, it’s about working as a team to understand how these tools can be used and leveraged, for example to gain greater efficiencies.

Finally, HR should articulate the findings to senior leadership, he says.

“Part of what HR has to do is say, ‘Hey, we've explored these tools, we've looked at some of them, here's how we're using ChatGPT, here's how we're not using it. Here's how we're using these other AI tools.’”

HR executives need to understand how important this is not only for their department, but for their organization, says Price, who is based in Baltimore.

“And you need a level of familiarity with the tool to speak about it intelligently, with your other executive peers, and your CEO.”

Benefits to ChatGPT

There are, of course, many benefits to the powerful tool, with a wide range of possibilities for HR tasks such as recruitment, workplace policies, training, performance reviews and employee surveys.

“It’s a cool tool,” says Price. “It can help eliminate — this is the exciting part... the creative energy spent on drafting a memo... It won't get you to 100 per cent — like you're not going to copy/paste — but I believe it's easier to edit a thing than to write it. So it can help free up your brain space when responding to questions or having to write short- or long-form content.”

Whether it's an email, an executive summary or preparation for an agenda, a lot of time is spent doing things that are mission-critical but could be delegated or outsourced to a tool or service that gives us more margin in our days, he says.

“That's the exciting part of ChatGPT… it should give people more time back in the areas that we consistently run up against our margin of bandwidth.”

Mitchem has used the tool for revamping a training course on presentation skills. After putting the whole course into ChatGPT and asking what might be missing, “it pumped out three different things that we had not thought of,” he says.

“That blew our minds… it actually taught us something that we had missed. Now, it's just making suggestions — whether we take it or not is up to us — but it did make several suggestions that we had not considered. So I think it can be predictive in that sense.”

However, HR departments will also have to look inwards to decide if staffing levels should change, says Price.

“If we're cranking out responses to employees faster, do we go from a staff of five… to a staff of three?” he says. “Companies are looking to be lean, but at the same time, I think there's an ethical balance of using a tool like ChatGPT to free people's time up, but not to eliminate roles entirely.”

Limitations to ChatGPT at work

In evaluating the power of an AI tool like ChatGPT, it’s important to appreciate its limitations and to set guidelines for its use, says Mitchem.

ChatGPT will write just about anything — from a blog to training materials to contracts — but bias or errors could still be at play, he says. And that’s why humans should still be involved.

“We've got to still look at the output — we can't just copy/paste and move on with life, because sometimes it may not be accurate. Or we may have prompted it incorrectly,” says Mitchem.

“If an HR person doesn't learn or doesn't have someone on their team that understands how this works, or doesn't hire someone to come into their team and say, ‘Hey, let's talk about some caution here, let's talk about where it fails, where it succeeds, what it's good at, what it isn't good at’… they will step into some dangerous territory, both legally and I think ethically; you could really go down a dark path very quickly because you don't know what you don't know.”

The more refined your queries, the better your answers are going to be, says Price.

“There are limitations there. I would never trust an artificial intelligence tool without peer reviewing and fact-checking it,” he says. “If we put in a good query, it will take us to about 70 per cent completion; but a standard user is probably looking at a document that's 30 to 40 per cent complete. And so there's still an editing process to it. It just helps you draft the material.”

That’s also why HR needs to be cautious, because many employees are undoubtedly using ChatGPT on the job already, says Mitchem.

“We should find out how many people are using it, and how they're using it, and how to help them use it better. I would approach it positively rather than negatively.”

Should ChatGPT be banned?

But that doesn’t mean HR should mandate that no one is allowed to use ChatGPT, he says.

“If you do that, what you're doing is, number one, you're going to ensure that people are going to do it secretly; and number two, you're not really paying attention — so you're not learning about it, which is going to put you at a deficit.”

At this point, you should assume that if people are using the tool to get a job, they’re also using it to do a job, says Price.

“We even have to re-evaluate our boundaries and perspective of… to use a sports analogy, what are the performance-enhancing tools that are allowable in corporate settings?”

Another concern? Feeding confidential data into the tool.

“You're dumping that into an open artificial intelligence platform that is learning from your data... It's not a closed-lock system of security,” says Mitchem.

“A lot of people don't realize that, that every time you dump something into ChatGPT, it’s learning; so if it's learning from your proprietary or your private information, that's really dangerous.”

That's another wrinkle, says Price.

“What about if someone knew that TD Bank was writing queries about how to do layoffs, or how to prepare a CEO speech to manage layoffs?... What could become of that? So I think that's more of the concern.”

The adoption of artificial intelligence (AI) has more than doubled since 2017, though the proportion of organizations using AI has plateaued between 50 and 60 per cent for the past few years.

How will ChatGPT impact recruitment?

Then there’s the recruitment side of things. It’s likely that a growing number of people are using ChatGPT to fix up their resume or write up their cover letters; there are even tools that can scan content to make it sound less like it’s created by AI.

Should HR be worried? “One-thousand per cent,” says Price.

“The same challenge that academia is about to face is the same challenge that recruiters and evaluators of talent are going to face, and you have to be a little skeptical about if someone did truly complete the task on their own or if they used artificial intelligence to support their presentation.”

On the other hand, you also have HR departments using AI to screen cover letters for keywords, says Mitchem.

“Basically, what we've created is a scenario where in the recruitment space, theoretically, AI is basically reading AI — so we've taken the human element out. And that's a problem.”

In response, HR should “kill” the cover letter and no longer consider it because “it’s useless,” he says.

“How does the recruiter discern who's being human and who isn't?” he says. “I think recruiters are going to have the biggest struggle in the near term, because they're going to have to have a lot more human interaction. And the sheer volume of what they do — it’s going to make their lives more challenging.”

That’s where the human element will become more of a priority for HR, says Mitchem.

“I would try to get, as quick as possible, some discernment about good candidates, so I can get them on the phone or on Zoom or in person, because I've got to get the human factor at play faster,” he says.

Overall, 63 per cent of employers said they are planning to invest in AI hiring solutions this year — compared to 42 per cent in 2020, according to a report.

And when it comes to staffing around AI, HR teams should be really proactive, says Mitchem.

That means they may have to eliminate five jobs that can be handled by AI, for example, but create 10 other jobs, such as someone who can go and teach executives how to use AI, another to build training courses on how to leverage ChatGPT and other AI tools, and another who's an expert at AI and ethics.

“Lots of jobs are going to get created with the word ‘artificial’ or the letters ‘AI’ or the words ‘artificial intelligence’ in them.”