Use of AI software in recruitment raises questions about accuracy, bias

Just over a year ago, Amazon found itself uncomfortably in the spotlight when it abandoned an artificial intelligence (AI) recruitment tool that turned out to be biased against women because the system had basically taught itself that male candidates were preferable.

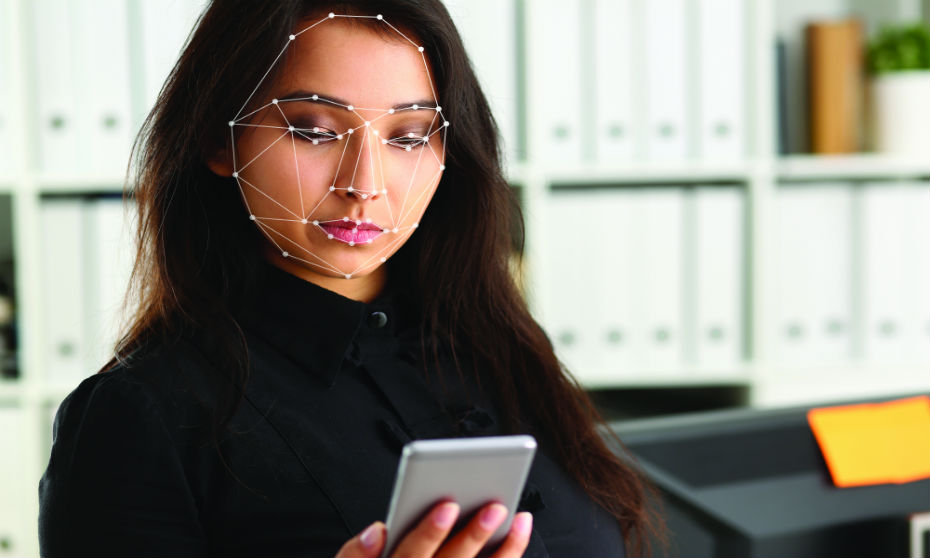

Fast forward 12 months and Unilever is now in the hot seat as news spreads that the consumer goods giant is using facial scanning software in its quest for good candidates.

This particular technology analyzes the language, tone and facial expressions of people when they are recorded on video answering a set of job questions on a cellphone or laptop. Made by HireVue, the software includes game-based assessments to collect tens of thousands of data points that are fed into predictive algorithms that determine a job candidate’s employability. HireVue says the software has been used for 100,000 interviews in the U.K. and that, worldwide, it delivers one million interviews and more than 150,000 pre-hire assessments every 90 days.

But the path to robotic screening may not be going as smoothly as planned. For one, the Electronic Privacy Information Center (EPIC) in the United States recently filed an official complaint calling for the Federal Trade Commission to investigate HireVue, saying the company’s use of AI is an unfair and deceptive trade practice.

“The harms caused by HireVue’s use of biometric data and secret algorithms are not outweighed by countervailing benefits to consumers or to competition. HireVue has failed to demonstrate any legitimate purpose for the collection of job candidates’ biometric data or for the use of secret, unproven algorithms to assess the ‘cognitive ability,’ ‘psychological traits,’ ‘emotional intelligence’ and ‘social aptitudes’ of job candidates,” said the complaint.

Good and evil

AI can be used for good and for evil, says Arran Stewart, co-founder of Job.com in Newport Beach, Calif.

“Video interviewing and analyzing body language, sentiment analysis, keywords analysis, depending on how you use that, can be good or bad,” he says. “We’re in the very early embryonic stages, whether you believe it or not, of how artificial intelligence can help us during the recruiting process. And I feel like this is a learning lesson.”

Unilever is a smart company that knows the traits it’s looking for, and it would have analyzed video data from previous successful candidates, looking for trends and patterns within that, he says.

“I’m sure [HireVue has] used what we call an equal data set, which means there will be a good blend of males, females, racial backgrounds, class backgrounds, college backgrounds, all of those various different things in order to give people a fair shot.”

Plus, HireVue is not offering sentiment analysis — such as assessing whether a person is being dishonest — because that potentially could be a misuse of AI, says Stewart.

While Unilever knows this is not the final answer in the recruitment process, the AI software has saved hours’ worth of screening time and millions of dollars, and obviously the company has made successful hires, he says.

“It’s not perfect. But then neither is the hiring manager that’s interviewing perfect because you get personality clashes during the hiring process, you can get two completely conflicting people from different classes, races, backgrounds, whatever, who both meet over an interview, and they just don’t necessarily click... Nobody talks about that stuff. But that’s just as much of a challenge as any artificial intelligence system is.”

And, obviously, this process is more efficient, says Stewart.

“It used to take statistically a good recruiter six seconds to read a resumé. And then artificial intelligence came along and meant that you could analyze and match 600 resumés per three million jobs per second, which meant you could do it on a huge scale.”

The next stage to the technology is the interview process, he says.

“Instead of having to hire and filter through... 10 hires per hiring manager, now a hiring manager can do 30 or 40. And that’s the difference; it’s efficiencies.”

This kind of approach will also appeal to the younger generation, says Stewart.

“We’re used to tech, we live our lives through social; the whole world knows what we do from Facebook, Instagram, Snapchat, you name it,” he says. “This is very much built for our generation because, by 2025, 70 percent of the labour force will be millennial. So, we are the dominant labour force.”

‘Early days’ for AI and recruitment

When it comes to recruitment and AI and algorithms, “it’s extremely early days,” says Jeff Aplin, president and CEO of the David Aplin Group in Calgary.

“I wouldn’t even consider it early-adopter phase, although the fact that some large employers are using it may be contrary to that comment.”

There will be a very slow adoption process when it comes to trusting robots in hiring people, and assessing people’s fit with a team, an organization’s core values and culture, he says.

“I’m skeptical for sure... there’s no question that some of the repetitive tasks in recruitment selection and talent management are... being replaced through artificial intelligence. But in terms of the actual hiring decision, I think it’s going to be quite a long time before we see hiring managers and employers in Canada trusting those kinds of systems.”

Having offered video interviews through his work, Aplin says hiring managers still like to meet up with candidates in person or have a live chat on Skype.

“When humans sit down with another human and have a conversation with them, they generally are more open-minded through that process to go ‘OK, I’m seeing something here that maybe I wouldn’t have seen in my initial three- to five-second response to seeing them on screen.’”

But when it comes to an employer hiring for a large event over a short period, the AI approach could make sense, says Aplin.

“You’ve got a lot of process upfront in the weeks leading up to that to screen people and hire them and onboard them and train them and orient them and all that stuff. So... technology can help accelerate those larger-volume hires where you’re dealing with a repetitive task.”

And there may be specific job categories where this could be effective, such as the hospitality industry, where it’s about “being particularly friendly and outgoing and approaching people at a table and engaging in conversation... and then selling to them that way,” he says.

“That might be some low-hanging fruit, for example. And so that kind of job category might be one that gets a bit more traction sooner.”

But when it comes to hiring for a more analytical or creative or leadership role, “I really do struggle with how they’re going to use the robots for that in the near future,” says Aplin. “Creative pursuits, problem-solving, analytical functions, I think those will be very difficult, at least in the short term, to replace by a simple video and asking robots to analyze their facial tics and movements and smile ratios and stuff like that.”

And what if a candidate has a facial deformity, asks Aplin.

“How is that going to work because they might be fantastic at a role, but how adaptable and malleable will that AI algorithm be?” he says.

“Humans see the bigger picture; robots don’t generally see the bigger picture. And so, if you’re dealing with people with disabilities or who have facial deformities, a speech impediment, these are all people with great ability and great talent to hire into specific job categories. Do you want to risk not considering them fully because you’ve outsourced your decision-making to a robot? I wouldn’t.”

‘Not yet ready for prime time’

Jonathan Gratch, director for virtual humans research at the Institute for Creative Technologies in Playa Vista, Calif., agrees the technology is “not ready for prime time,” citing its limitations. While many companies might think it’s a cool approach that will attract interest, they probably haven’t thought it through very carefully, he says.

There’s also the concern about algorithmic bias, where these technologies aren’t trained uniformly on different races or ethnicities, says Gratch.

“You have to know what that algorithm has actually learned and be able to expect it. And in many cases, machine-learning algorithms are just a black box, you actually have no clue... My presumption would be the data they train this algorithm on is a human decided that this is a good or bad candidate. And so... if the people that made that decision are biased, then the algorithm is learning the same biases people have, and it’s not clear that it’s actually addressing that problem.”

And while the software may be able to capture facial expressions reasonably well in a controlled laboratory environment with good lighting, with the person looking straight at the camera, in a less controlled setting, it might not be as effective, says Gratch.

“When you light from above or below with harsh light and create shadows, that might look to the machine eye that someone has a certain facial expression which they’re not holding,” he says.

“If you’re wearing dark glasses, if your hair is over your eyebrows, if you have dark skin or... lots of wrinkles, they can make incorrect inferences. [And] even if you can infer what the facial expression is, taking from that to infer something about ‘Is this a good candidate?’ is somewhat problematic.”